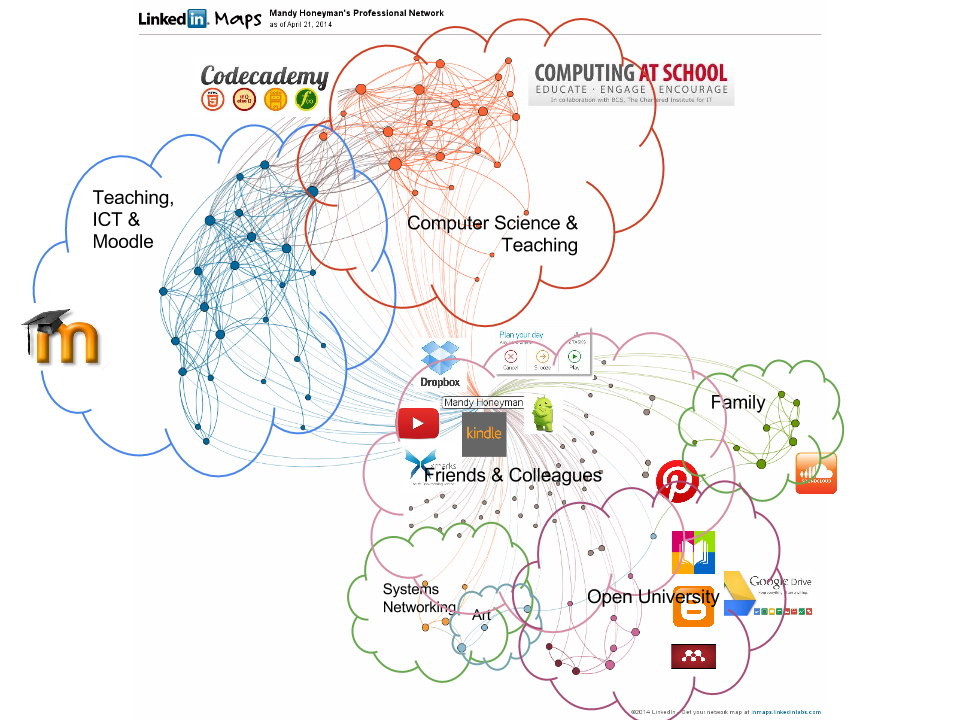

A personal learning environment (PLE) is simply the collection of applications, websites and technologies that we use for studying. Because I also learn from people, I have incorporated my personal learning network (PLN) into my PLE. A PLE changes all the time, and this one was created a couple of years ago. If I were to redraw it today, I would make Twitter a much bigger part of it and I would include Moodle too.

My PLE also includes things that are not represented in this image because they don’t have icons. For example, I am typing on a Chromebook, and this little notepad computer has become the place where I study most of the time. I don’t write assessments on it and I can’t use Mendeley on it, but for reading, making notes and searching it is great and very portable. The most important thing for me, though, is that I am not at my desk: if I were at my desk, I would be worrying about work rather than working on my studies. So I do think that a PLE needs to include a sense of the physical environment as well as the technological one.

When I first did this exercise I looked at a lot of other people’s PLEs and saved their images to Pinterest. Pinterest then became more interesting as a space for keeping diagrams and images on other research topics, as well as a shopping wishlist!


So where are PLEs headed?

By their very nature PLEs are fluid: the applications and technologies will change as our needs change. At the moment I am using Twitter much more than I have in the past. Partly this is because it is encouraged by the course I am taking (MA ODE), and many of the students are using the #H800 hashtag to support each other and share experiences. It does make me wonder, though, whether my use of Twitter for this module is really part of my PLE at all, or whether it has actually been usurped by the module team. However, because I still follow many other people who are constantly introducing me to interesting resources and material, I think I can be relaxed about this.

I have also moved from eBlogger to hosting this blog in my own WordPress environment. Because I learn from reflecting and I use my blog for reflecting, it also needs to be included in my PLE. I purposely chose to keep my blog away from the OU’s hosting service (still part of Moodle) because I wanted to use my blog more openly and, in the end, I am of course intending to attract an audience. I don’t think that happens via the university’s hosted blog service. I certainly hardly ever read any of the blogs there, but at the same time I recognise that for students who don’t want to start their own account anywhere else, it is more convenient to satisfy course requirements by taking the simplest route.

Issues

Students should be free to make choices and work together. Whether these choices are free of influence is a different matter, and probably one that will become more interesting to look at in the future. At the moment I think we are in a period of settling in: we are getting more used to incorporating different technologies into our learning as students and our teaching as teachers. It is only when those technologies are embedded that we will really be able to see the effect they have had. There is a tension between innovating and experimenting on the one hand and giving students a good learning experience on the other.

I learnt this the hard way when I used a beta version of AppInventor with some students on a GCSE project. Unfortunately the hosting of this application was changed halfway through their project, and this caused a few problems. I had assumed that something hosted by Google would be more stable; now I know better. The stability of any technology being incorporated into an assessment has to be a priority. Although everything turned out okay in the end, it was unnecessarily stressful at the time. When I was teaching, my approach was always to stay ahead of the curve with technologies. I think my students appreciated getting to try out new things, and it often made the tasks they needed to do fresh and exciting. But sometimes, as is the way with all technologies, there were delays and frustrations too.

This shouldn’t, however, ever stop us from assessing new technologies in order to find fresh ways to approach learning and teaching.